Blog #22 - October 13, 2025

Yvonne: Fake Life Coach – Behind the Scenes

Behind the Scenes of Finding Balance

This short may only last 20 seconds, but it took days to craft. I wanted to explore the world of influencers—those polished faces promoting balance, self-love, and calm. But how balanced are they when the camera’s off or something goes wrong?

That question became the seed for Yvonne: Fake Life Coach, the fourth short in the Orwell Online series. It’s a gentle satire, poking fun at the divide between the image we present… and the reality we suppress.

Influencers, Cameras, and AI Confusion

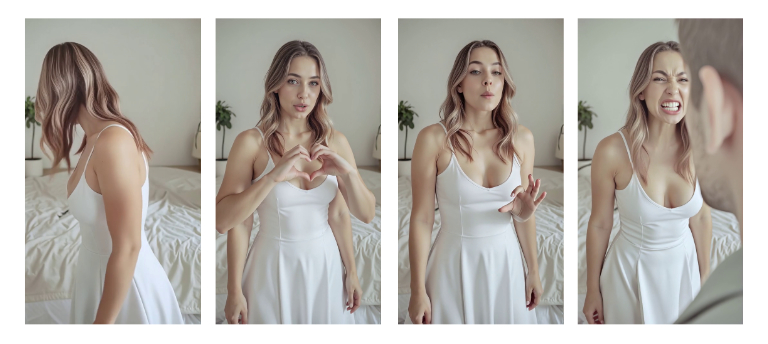

To start, I had to generate the right kind of influencer: stylised, composed, serene… at least on the surface. This meant testing multiple AI models, trying to get the look right without slipping into uncanny or over-glamorous territory.

Early influencer tests.

Initially I was going to have Yvonne film a selfie, but even getting her to hold a phone properly proved tricky: some AI tools confused whether the camera was in the scene or filming the scene.

Lipsync, Emotion, and the Tradeoff of Control

Creating movement is one thing—but combining that with speech and emotion is something else. I tried generating clips of her talking directly from audio driven by text prompts, but the results were often robotic, emotionally flat, or completely mismatched: she’d look calm while shouting, or vice versa.

This kind of work often means combining multiple AI elements—lip-sync, audio, character design, environment and video—all into a single generation. But the more control I want over those elements, the more I’m forced to use older, less advanced AI models. It’s an ongoing tradeoff: flexibility versus visual quality.

Unwanted character generations (video frames).


Because of those mismatches, I broke things down. Instead of trying to animate everything at once, I generated separate clips for different emotions and gestures, and then stitched them together in edit.

Even lipsyncing itself became a multi-stage process: it required refined prompt writing, rerolling, emotional adjustment, and often discarding full attempts due to one small glitch.

Recording Myself to Drive the Scene

Eventually, I tried something new: creating a video of myself acting out the scene, and using that as the source to animate both Yvonne’s mouth and body. But this approach also brought complications.

Although I knew the animation layering method I needed, the software kept rejecting my video. It simply wouldn’t recognise or track it properly. I even considered placing motion capture marker dots on my face to help the AI track my expressions, something I’ve done before in earlier 3D work. In theory, modern AI motion tracking should remove the need for that… but in practice, we’re not quite there yet (or perhaps I need to investigate other tools).

So here I was—with a set of tools, the ideas, and the workflow—but unable to make them all cooperate. And this was just for a 20-second joke… that might not even get seen. (Was the joke on me, for even trying this?)

Voice: Me as Yvonne

I’ve worked with AI voice tools before, but this time I needed something very specific: emotional nuance and natural pacing.

After multiple failed attempts using AI speech directly, I took a different route: I recorded the voice myself. Then I used AI to transform my voice into Yvonne’s, rather than generate it from text or prompting. That gave me control over tone, timing, and delivery. So yes, that’s actually me talking and yelling in the final video!

Editing and Effects

With the visuals and voice assembled, the final stage was editing. I used DaVinci Resolve, combining transitions, audio, facial animations, and a subtle camera shake effect—created through node editing (I feel I’m creating new node effects with each video these days), with a nudge of help from Claire (ChatGPT).

The crash felt key to the whole scene, so I originally tried generating AI visuals and sound of vases smashing, just to match the moment. (Yes, I really generated video of a falling vase that I didn’t intend to show, just to get the sound FX.)

Breaking Vases - to generate audio (and then not used)


However, sanity prevailed, and in the end I shifted to stock audio from Epidemic Sound, layering multiple clips to match the impact: a far more practical approach.

Ever-Evolving Tools, Ever-Evolving Process

These short films are more than just content—they’re part of my ongoing learning process. Each project challenges me to explore new tools, refine my methods, and find better ways to express subtle emotional shifts. That learning is deliberate. The videos may be short, but they push me to develop skills I hope to draw on in longer-form work later on.

A Quiet Satire

Like the other Orwell Online shorts, this isn’t meant to shout. It’s a quiet nudge—a contrast between appearance and reality. Yvonne’s the calm life coach who says “balance comes from the heart”… until her persona drops, and she snaps.

'The world may be a stage' – but who are the actors?

Thanks again to Claire (ChatGPT) for creative support, editing help, technical fixes, and the occasional morale boost while burning the midnight oil. And most of all, thank you for following my journey.

— David

Waterlane Studios

🎬 Watch the short film here: https://youtube.com/shorts/n9xg_rEgKuI

I’d love to know what it stirs in you.