Blog #21 - October 5, 2025

D.A.W.G. – Behind the Scenes & the Shock Ending.

This blog offers a short behind-the-scenes reflection on the making of D.A.W.G., a fictional Orwellian advert and the third short film in the Orwell Online series from Waterlane Studios. It started, as many of my projects do, with a simple idea:

When we use technology, how does it shift the balance of who we are?

At its core, D.A.W.G. is an exploration of balance: what technology appears to offer us with one hand, and what it quietly takes away with the other. My goal was to create something emotionally engaging on the surface, yet thematically deeper for those who choose to watch to the end.

Designing the DAWG

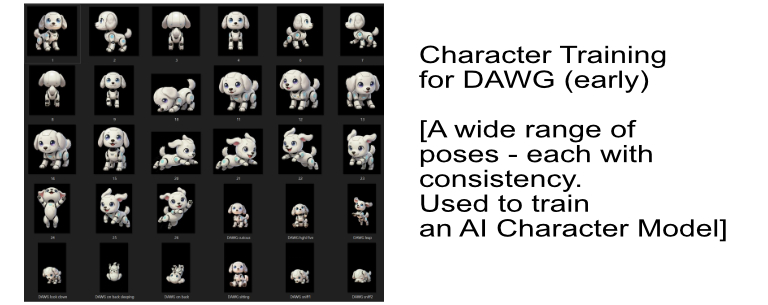

The first challenge was the dog itself. I explored a range of robot dog designs — some metallic, others with soft fur or more humanoid traits. But because the dog moves through the frame, turns, jumps, and exits shot, consistency quickly became an issue. To solve this, I trained a character model, which required generating dozens of variations of the same dog in different poses and lighting to help the AI “lock in” to the design.

Stylisation and Realism

Originally, I imagined starting with a slightly cartoon-like aesthetic, then shifting into realism at the end — a visual cue that something was changing. But after testing, I found the cartoon look made the product feel too fake too early. I wanted viewers to believe in the promise of DAWG as a real-world product — one that could be marketed today. So I committed to a more grounded realism from the start.

The Tools and the Dog That Stayed

Using multiple models meant dealing with multiple constraints: some forced specific aspect ratios, others didn’t support trained characters. I eventually settled on a new dog, more metallic but playful, designed through iterative generations using the popular Nano-Banana model, supported by SeeDream and Flux Kontext Pro. Each model responded differently to visual tweaks, so this stage was a combination of nudging, rerolling, and refining until I found something that worked across shots.

In the end, I had a small but usable range of consistent DAWG + family + environment frames that could be built into motion.

Assembling the Shots

From those “keyframes,” I began creating video clips. This often meant generating partial sequences and then “shift-editing” — such as removing one family member and reposing the others for a new version of a scene. Many attempts were needed to find frames that lined up or transitioned cleanly.

The process is very back-and-forth, requiring both patience and flexibility. My go-to generator here was Kling, which continues to offer a good balance of realism and responsiveness.

Audio – Music and Voice

Audio was its own iterative process. I composed the music using Suno, experimenting with different tonal directions — light, calm, emotionally restrained. I tried versions with no audio, sparse instrumentation, and fuller soundtracks. In the end, I actually cut and reordered parts of an AI-generated piece to better match the story’s rhythm and flow.

The voiceover also went through many rounds of refinement in ElevenLabs — adjusting pacing, tone, and emotional resonance. Stability settings and subtle emphasis changes made a big difference in the final read. Even with a short script, it took time to dial in the right delivery.

Final Assembly

The edit was completed in DaVinci Resolve, where I layered the visuals, transitions, effects, voice, and music. Rendering brought its own challenges, with some renders failing because of RAM issues. Some shots, like the one with a TV playing another of my videos (Looking for Love), required Fusion compositions (node-based editing) to embed one video inside another. That moment, with the robot dog and child watching another AI-generated video, added an eerie, recursive twist I really liked. [Hopefully a nice little ‘easter egg’ for those who follow 😊]

The Final Result

This may only be a short film, but every part was crafted with care — from the subtle family smiles to the glitch in the logo. The piece rests on a quiet shift in tone. For those who stay to the end, I hope that shift is felt.

It’s not about shouting a message. It’s about gently pulling back the curtain — creating space to reflect on how the world is changing… and how we might be changing with it.

Thanks again to Claire (ChatGPT) for help shaping the voiceover script, developing taglines, refining structure, and being a constant creative sounding board throughout the process.

— David

Waterlane Studios

🎬 Watch the short film here: